|

Archive: March 8, 2025

Archive: March 8, 2024

Archive: March 8, 2023

|

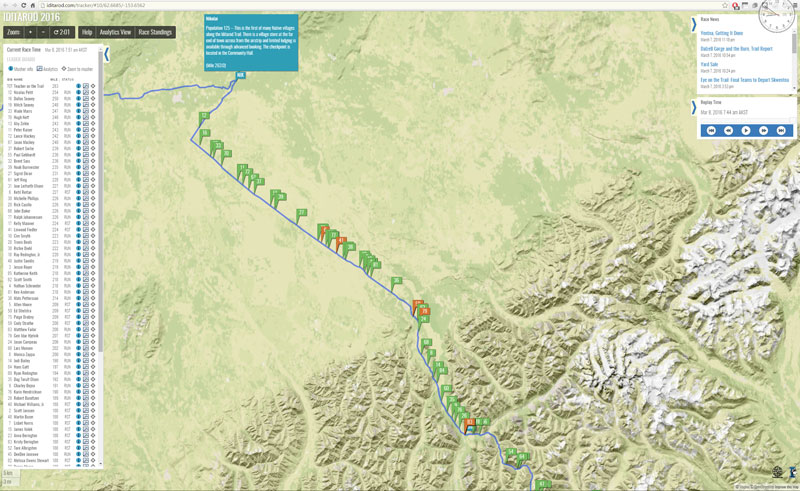

It's day three of the 2023 Iditarod, which means ... it's time to take the day off! Everyone seems to be taking their mandatory 24-hour rest now (view of Flow Tracker):

Well, everyone except Wade Marrs; he's shown as being in first, yay, moving from Ophir to Iditarod, where he will likely stop and take his 24. The strategies here are most interesting; Nic Petit is actually (I think) in first place, moving from Nikolai, where he has already completed his 24. (I highlighted him in the screenshot above by hovering over his name.) I think by the time he reaches Iditarod - likely sometime late tonight - he will pass Wade and take over the lead. That's 70 miles for him, probably two runs, and will take him about 12 hours.

On the way he will pass Ryan Redington, Richie Diehl, and a host of others doing their 24s in Takotna, and then Jessie Holmes and Brent Sass, who are sitting 2nd and 3rd doing their 24s in Ophir. My prediction from speeds and timing is that when everyone is running through Iditarod, the order will be Nic, Brent, Jessie, Ryan, and Richie. I think Pete Kaiser and Matt Failor are also in the mix, as are Eddie Burke (!) and Mille Porsild (yay), with Hunter Keefe in 10th. We'll check on this :)

The key factor seems to be the heat; many mushers want to rest now, with cooler weather predicted ahead. Trail conditions so far have been good. If it stays warm(er), it will make the Yukon River and then Norton Sound tougher, but we'll see. A lot of racing left.

Some pics:

Go Mille + team!

|

Archive: March 8, 2022

Archive: March 8, 2021

Archive: March 8, 2020

|

A filter pass after a longish quietish weekend ... for once it seems it is *not* all happening (but some things are anyway...)

Did you watch the Iditarod start? How about the restart? (The "real" start?) Well they're off, into heavy snow, and good luck to them. Everyone is predicting a snowy, sloggy, and slow race. For a taste of what it's like, check out this cool video of rookie Quince Mountain's prerace ride.

And for those of you who are interested, the Iditaflow tracker is up and running...

Congrats to SpaceX for their 50th landing of a first stage booster. Wow. I can remember the first one and I do believe I've watched them all. Onward!

This looks super cool: In the ingenious game Lightmatter, lights do matter...because the shadows will kill you. Sounds like a great premise. I must admit, I try these games and they hold my interest for like 15 minutes and then poof! I'm on to the next thing. But I will try it and YMMV.

Another awesome infographic from Visual Capitalist: the World's Highest Mountains and what their names mean. Speaking of the Iditarod, the highest mountain in North America is Mt. Denali (formerly Mt. McKinley), and Denali means ... "high mountain" in Athabaskan :)

Yeah, MVPs are hard. "We're so biased it's scary."

Coronavirus update: we now have 109K cases, and 3.8K people have died. I think in the near future the rate of new cases will increase because a lot of people are now being tested. But at the same time, the apparent mortality rate will decrease, as many mild, previously undiagnosed cases get counted.

Peter Thiel: The new Atomic Age we need. "The single most important action we can take is thawing a nuclear energy policy that keeps our technology frozen in time. If we are serious about replacing fossil fuels, we are going to need nuclear power, so the choice is stark: We can keep on merely talking about a carbon-free world, or we can go ahead and create one." I wish all those who worry about climate change would embrace nuclear energy as the way out.

Did you know? U.S. led all countries in reducing CO2 emissions in 2019. Ah, the things *they* don't tell us...

|

Archive: March 8, 2019

Archive: March 8, 2018

Archive: March 8, 2017

Archive: March 8, 2016

|

This morning we recap Iditarod 2016 day 2, as "the peloton" make their way up the Alaska Range to Rainy Pass. The leaders have already started down the backside, through the most treacherous sections of the race: the Dalzell Gorge, and then the Farewell Burn.

[at left, Nicolas Petit and team]

Leader Nicolas Petit has nearly made it to Nikolai (#12 on the map below), closely followed by defending champion Dallas Seavey (#16) and his father, two-time champion Mitch Seavey (#19). Wade Marrs, Hugh Neff, Peter Kaiser, perennial contender Aliy Zirkle, and brothers Lance and Jason Mackey complete the top ten. No surprises so far. Many of these teams will stop and take their 24-hour rest in Nikolai.

[at right, Dallas Seavey and team]

The reports are that the Dalzell Gorge is in much better shape this year than it was in 2014, a year when many competitors wiped out there, and more than a few had to drop out afterward. Plenty of snow and good trails.

[at left, Dalzell Gorge trail]

Similarly for the Farewell Burn, a desolate area in the middle of nowhere where you are surrounded by the ghosts of old trees burned by a fire.

[at right, Farewell Burn...]

Race veterans say if you can make it to Nikolai, you can make it to Nome. Of course there are still 700 miles to go, long cold stretches along the Yukon River, and icy wind along the coast of Norton Bay. The possibility of dogs encountering moose. And the ever-present possibility of bad weather.

[at left, sunset coming into Nikolai]

[The pictures are from the amazing Sebastian Schnuelle, a successful musher in his own right who is following and blogging the race, and taking a bunch of great pictures. Be sure to follow him on the Iditarod website.]

Onward to Nome!


[All Iditarod 2016 posts]

|

|

Ha ... did I say I had recovered from my cold? Kidding. Precelebration is the root of all failure. Anyway, yesterday was one of those days; I spent the whole day refactoring some code to make it better, only to realize at the end that it was not actually better. This morning I'm back to the code as it was on the weekend. Sigh. I have been enjoying the Iditarod so far, as always (go DeeDee!), and am now deep into the mysteries of SVG ... and making a filter pass.

So this is cool: MIT scientists stunned by scalable quantum computer. Apparently they have now made five "qubits". I remain skeptical about quantum computing, but then, Einstein was skeptical about quantum mechanics, too. Worth watching.

Apropos: this McKinsey report (!): the growing potential of quantum computing.

Not all research is equally cool: this paper appears to imply glaciers have gender. Wow, that's about all I can say. You almost think this must be a hoax, but then you realize, as a hoax it would be too obvious.

Here we have ice fishing in one of the world's coldest cities (Astana, Kazakhstan). I've been to Astana, but fortunately it was in spring. Astana is not only one of the coldest capital cities in the world ... it is also one of the warmest. I guess the weather on the Kazakh steppes is ... variable. A good place to be a climatologist! (of any gender :)

Brad Feld with an interesting rumination: No one gets out of this alive. (Where by "this" he means "life".) "While I fantasize about the singularity and hope I live long enough to have my consciousness uploaded into something that allows me to continue to engage indefinitely, even if it’s a simulation of mortality, I accept the reality that life is finite." So far ... :)

The glasses-free technology that made me believe in 3D TV again. The human factor here is pretty important. After watching Avatar I was convinced 3D would take over, but it hasn't, not even in movies, and I think that's because of the glasses. The same problem that will afflict VR.

Speaking of VR: Leap Motion's Minority Report-style gesture controller gets smarter, faster, and more accurate. This is way cool; the input side of the VR equation is just as important as the output side.

And more speaking of VR (and more Brad Feld): Mercy Hospital virtual care center supported by Oblong. Remote healthcare is one of the most compelling applications for AR/VR, removing the barriers of space and time to bring expertise to people who need it. Oblong was founded by the people who made Minority Report, and began as a company making gesture controllers; their Mezzanine product now drives rooms with the walls covered in monitors, and supports wands as well as gloves. Much better for business and medical settings where people are not going to wear goggles or gloves.

All of which goes to show, you need more NASA technology in your life. A nicely done post on the NASA tumblr which shows off some of the tech developed for space which later made it into our lives (and no, Tang is not included :) I think the ongoing research on human bodies and how they are affected by space is some of their most important work.

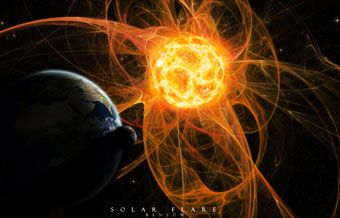

NASA also do a lot of research on solar storms...

|

Archive: March 8, 2015

For you #Iditarod fans out there, here's an index of my 2015 Iditarod posts:

Onward!

|

Archive: March 8, 2014

|

Good morning all; while you were sleeping Aliy Zirkle has taken the lead in the 2014 Iditarod, mushing past Martin Buser while he rested in Kaltag. They are now headed toward Unalakleet, and as you can see below it is a close race.

To orient you, the teams are headed west (<-) toward Norton Sound on the Bering Sea; the finishing town of Nome is behind the leaderboard at the upper left. The blue labels are checkpoints: UNA = Unalakleet, KAL = Kaltag, and NUL = Nulato. #10 is Aliy Zirkle, now in the lead, followed by #36 Martin Buser, #29 Nicolas Petit (!), and #14 Dallas Seavey. A team's tag turns orange when they are not moving, i.e. resting in a checkpoint.

I've been following the Iditarod for several years now and I must tell you, this is the most exciting race I've seen. There are ten teams within a few hours of each other with 300 miles to go. Onward!

(All Iditarod 2014 posts)

|

|

The 2014 Iditarod continues its record-breaking fast pace over hardpack and dirt, as the leaders are now through Unalakleet and off across Norton Sound to Shaktoolik. Each team rested for a while in the heat of the afternoon, and of course there's some strategy involved: leave earlier and save time, or leave later and go faster. Aliy Zirkle was the first to go, with Martin Buser 52 minutes behind and Sonny Lindner 1:20 behind. Jeff King took off fourth, trailing by 2:50, and Aaron Burmeister fifth, trailing by 3:40. Those are the teams that can win, with about 300 miles to go.

At right, Aliy Zirkle and team (11 dogs)

A lot will depend on how rested each team is after their long trek between Kaltag and Unalakleet. Interesting that the lead group includes Martin Buser ("rest early"), Aliy Zirkle ("rest middle"), and Sonny Lindner and Jeff King ("rest late"), and that after seven days they are all within a few hours of each other. There's a lot of racing left and it will be a close finish.

At left, Martin Buser and team (14 dogs)

Veteran musher Sebastian Schnuelle isn't racing this year; he's blogging while leapfrogging the leaders via snowmobile, and he's been posting some great reports. Here's his view on the trail to Shaktoolik: "The trail into Shaktoolik is challenging. Leaving Unalakleet there is very little snow, sometimes none, sometimes a ribbon of ice." Whew.

At right, Sonny Lindner and team (14 dogs)

Onward across Norton Sound!

(All Iditarod 2014 posts)

|

Archive: March 8, 2013

Archive: March 8, 2012

Archive: March 7, 2011

|

Back to normal - for real. Friday I noted I felt "back to normal", after a period of intense preparation for a couple of conferences. But today was the first Monday of normal. I celebrated by making a big schedule of possible travel for the coming year. Who needs normal?

This Russian power plant is modern, yet it uses buttons and knobs and gauges. Is this safer? There is something satisfying about real knobs, right? When I was a kid I dreamed of someday owning a Marantz receiver, just because of the awesome knobs :)

Exploring the moral universe of Wonkette. Scary.

So apparently too much technology has destroyed our ability to sleep. Oh, please.

Tesla's blog is back! And they've posted a quick update on the Model S. Yay! (Their blogging is welcome but a tad lame; if you're blogging about the Model S, show us a picture of the Model S!)

Bonus observation: car websites are among the most difficult to use, despite being among the most expensively crafted.

Philip Greenspun: Consumer Reports ranks automaker quality. "How did your $100 billion in tax dollar contributions work out? GM was near the bottom, with crummy cars that have average reliability. Chrysler was an outlier at the bottom, with off-the-chart bad test results and worse-than-average reliability. This could also serve as a scorecard for government industrial policy. The U.S. government has gone to extraordinary lengths to prop up GM and Chrysler, but their products remain uncompetitive." Although I am loath to let the government off the hook, I think the unions are most to blame.

This video is most excellent; if I tell you it is a baby laughing at paper being torn, it won't do it justice. I also love Gerard Vanderleun's caption: New York Editorial Reader in Training :)

The need to code. It is strong within some of us :)

Excellent! Real-life version of UP! house attached to 300 balloons takes flight. No word on whether this was easier or less expensive than making an animated movie about it :)

Onward!

|

Archive: March 8, 2010

|

"ten candidates"

and the winner is...

|

Archive: March 8, 2009

|

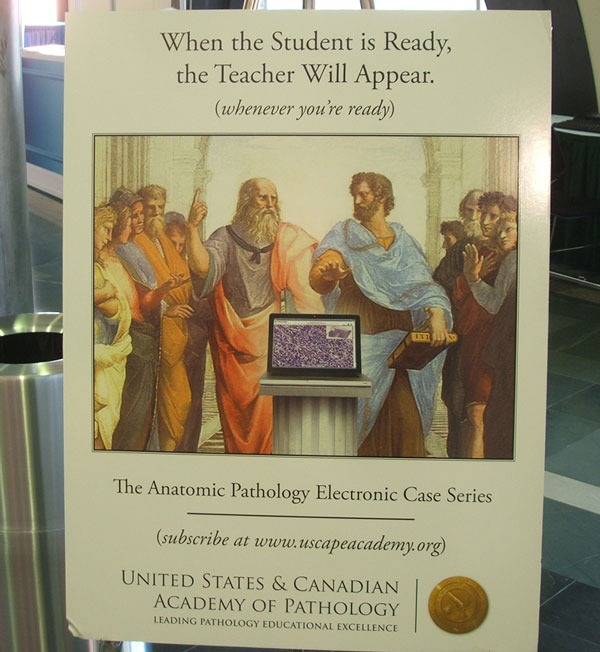

I’m attending the USCAP conference in Boston – many more pics and a report to follow – but today I was in the Exhibit Hall checking in with the Aperio team getting ready and I saw this poster in the entrance to the CME (continuing medical education) area:

How cool is that? USCAP are using Aperio's technology in their online CME! And if you visit the URL www.uscapeacademy.org, you’ll find a bunch of electronic case studies, presented with whole slide images using Aperio's ImageScope and WebScope as the viewers!

This is excellent. I must tell you, it gave me chills when I saw it. Our vision of transforming pathology is becoming a reality!

|

|

Ah, the life we lead... had a wonderful dinner tonight with good friends at Bricco, in the North End of Boston... beef carpaccio w parmesan cheese, zucchini blossoms stuffed with ricotta and infused with truffle oil, tortelli w pumpkin amaretti, accompanied by a bottle of the reliably amazing Tignanello... it pretty much doesn't get any better. Thanks Greg!

How nice to just take a break; slept in and read for a bit (finished We Might As Well Win, which ended as well as it started; highly recommended), walked around, shopped a little, and spent time in vacation mode before flipping the switch back into work mode. I am on negative time delivering some code and did make some progress... some. I'm here attending the USCAP Conference, and tomorrow it kicks off in earnest.

The weather in Boston is delightful - walked around in the sun, enjoyed the day, had a nice lunch on an outside patio - and everyone here is surprised; apparently this is a major departure from a crummy winter. Good timing I guess, what can I say, it has been delightful...

And so the Ole filter makes a right coast pass...

Philip Greenspun wonders: Will turning the U.S. into Europe save our economy? This is one of those questions where if you have to ask, you already know the answer. The good news is that I doubt Americans will really stand for this; we'll tolerate a little now, because we're going through some pain, but as soon as it is obvious federalization makes things worse, the pendulum will start swinging back. I hope I'm wrong, but Obama already looks like a one-term president.

Apropos: Meltdown. [ via Instapundit ]

PS John McCain thinks GM should be allowed to go bankrupt. Me, too. They've worked hard for it, they've earned it, and now they deserve it. Honestly, what bad thing will happen?

Maybe you don't have room in your house for a wine cellar, or wine room, and then maybe this is your solution! This spiral wine cellar will go anywhere, and they move the dirt straight out your front door. I love it!

Another object of desire: The Audi Shark Hovercraft. To the bat cave, Robin!

Excellent news: Richard Morgan has a new book out: The Steel Remains. I am going to read it - via Kindle, of course - and you should too...

This totally misses the point: Why iPhone users won't flock to the Palm Pre come June. The Pre is not a better iPhone, it is a better Palm. There are a lot of us who want a real keyboard and who like Sprint better than AT&T, and we are the ones who will flock to the Palm Pre come June. I have my Pre-order in already, do you?

And so Italy are building the world's longest suspension bridge, two miles, across the Strait of Messina between Sicily and the mainland. Wow, you can believe this is pretty controversial.

|

Archive: March 8, 2008

|

At this point riding centuries is a bit old hat for me, so perhaps I should stop posting about it. After all, I've sort of moved on to longer rides now; a "mere" century cannot be that interesting, right? But today I successfully completed the Solvang Century (da da dum), with about 4,000 participants, and anyway it was a great ride, so heck, I'm going to post about it. So yeah, I did it, riding time 5:32, which, considering the wind, was pretty darn good.

Here's a picture of me at the top of Ballard Canyon, with about 10 miles to go; it is notable for the house in the distance, which is my absolute dream, a gorgeous mansion surrounded by Pinot Noir vineyards.

Hey, everyone needs a stretch goal, right?

Next up for me is a double-double at the end of March: the Solvang Double, followed the next weekend by the Hemet Double. 400 miles in 8 days. That should keep me out of trouble :)

|

|

John Marshall on Clinton vs Obama: Thank You, May I Have Another? Not only is the bloom off the Obama rose, but the thorns are missing. I know I don't want him answering that phone at 3:00AM.

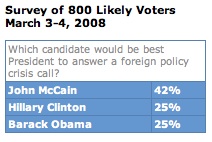

A Rasmussen poll which asked "who would you want to answer the phone" shows Clinton and Obama deadlocked at 25%. McCain won easily at 42%.

In other political ad news, Ann Althouse likes John McCain's new ad. It's about time.

A great post at Maggie's Farm: "I loved that jet". "Only the Mach indicator is moving, steadily increasing in hundredths, in a rhythmic consistency similar to the long distance runner who has caught his second wind and picked up the pace... With the power of forty locomotives, we puncture the quiet African sky and continue farther south across a bleak landscape." I had the honor of meeting Kelly Johnson several times socially at his ranch. Especially remarkable about the SR-71 is how old it was; this plane was designed in 1962! And it is *still* the fastest jet ever flown. [ via Gerard Vanderleun ]

Reader and fellow cyclist Joseph emailed me this link: Steve Jobs made me miss my flight. Ah, the perils of owning an MBA. (MacBook Air.)

Here we have a jet-powered Dodge Caravan, complete with soccer ball logo on the back. "Like us, he apparently thought the 150-hp V6 just wasn't enough and added some supplemental power via a 1,000-hp helicopter jet engine." Excellent. [ via Instapundit ]

This is excellent; xkcd posted a tribute to Gary Gygax, inventor of Dungeons and Dragons, who recently died. Er, you have to see it...

Andrew Grumet considers Life and Work. I have to agree; these are not mutually exclusive, but heavily intertwined.

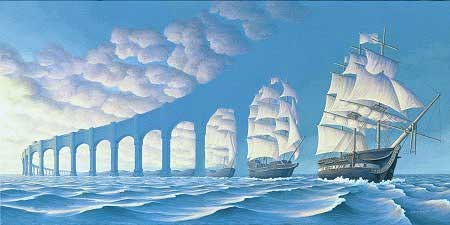

Finally: I love this picture so much, I'm going to run it again. Very thought-provoking, somehow. A close friend has a mantra, "don't worry about what you can't control". And yet, I worry about everything. It may be out of my control :) Anyway, looking at this picture, you realize how small and petty are the affairs of men. A lot of the stuff I worry about doesn't matter at this scale!

|

Archive: March 8, 2007

Archive: March 1, 2006

|

From my friend Diane Simons:

A young woman was about to finish her first year of college. Like many others her age, she considered herself to be a liberal Democrat, and was in favor of the redistribution of wealth.

She was deeply ashamed that her father was a staunch Republican, a feeling she openly expressed. Based on the lectures that she had participated in, and the occasional chat with a professor, she felt that her father had for years harbored an evil, selfish desire to keep what he thought should be his.

One day she was challenging her father on his opposition to higher taxes on the rich and the addition of more government welfare programs. The self-professed objectivity proclaimed by her professors had to be the truth and she indicated so to her father. He responded by asking how she was doing in school. Taken aback, she answered rather haughtily that she had a 4.0 GPA, and let him know that it was tough to maintain, insisting that she was taking a difficult course load and was constantly studying, which left her no time to go out and party. She didn't have time for a boyfriend, and didn't really have many college friends because she spent all her time studying.

Her father listened and then asked, "How is your friend Audrey doing?" She replied, "Audrey is barely getting by. All she takes are easy classes, she never studies, and she barely has a 2.0 GPA. She is so popular on campus, college for her is a blast. She's always invited to parties, and lots of times she doesn't even show up for classes because she's too hung over."

Her father asked his daughter, "Why don't you go to the Dean's office and ask him to deduct a 1.0 off your GPA and give it to your friend who only has a 2.0. That way you will both have a 3.0 GPA and certainly that would be a fair and equal distribution of GPA."

The daughter, visibly shocked by her father's suggestion, angrily fired back, "That wouldn't be fair! I have worked really hard for my grades! I've invested a lot of time, and a lot of hard work! Audrey has done next to nothing toward her degree. She played while I worked my tail off!"

The father said, "Welcome to the Republican Party".

Perfect.

Of course, Audrey was a minority, it wasn’t her fault that she played all day and got poor grades, it was discrimination and cultural bias. So the GPA system was “rebalanced” such that Audrey got a 4.0.

P.S. The average GPA at Harvard is now 3.5. No wonder Larry Summers quit.

|

|

Tonight is going to be serious. I'm in a feisty mood. Sure there were some Apple announcements today, but there's other stuff happening too. The Ole filter makes a pass...

Can I just say, once again, publicly, how great Charles Johnson's Little Green Footballs is? Okay; it's great. I link it pretty often, but I read it every day. This is important stuff, real stuff. Check it out. Subscribe to it.

I read Daily Kos, too; I don't just read what I agree with. To me the difference in tone, the difference in logic, the difference in maturity is obvious. Your mileage may vary, but if you aren't getting news from sites like these, you aren't getting news. TV "news" is entertainment, not news. And newspapers are going the same way, unfortunately.

I received this video from my colleague Mark Wrenn, entitled "German engineering vs. Arab technology". I have no idea if it is a real VW ad - I hope so, but I doubt it - but it sure is food for thought. When did it become commonplace that people would blow themselves up to make a point? Weird.

Scott Adams on Strange Laws. "There are a lot of laws that don’t make sense to me. For example, if I were king, I’d make attempted suicide punishable by death. That’s a win-win scenario." Scott is like George Carlin.

Douglas Murray: We Should Fear Holland's Silence. [ via Instapundit ] Indeed.

Gerard Vanderleun: Saddam Lied on Tape. Somewhat less reported in the MSM than Dick Cheney's hunting accident, but somewhat more important, don't you think?

Eric Raymond: Media Analysts Sound Pessimistic as Iraq Civil War Fails to Materialize. "Media analysts sounded an increasingly gloomy note today following news that a full-scale outbreak of civil war in Iraq had been averted. 'The prospects for regime change in Washington seem increasingly remote,' said one senior White House reporter who spoke on condition of anonymity." Zing.

David B on GNXP: The Evolution of Cooperation. "The existence of cooperation is one of the major problems in human evolution. Among non-human animals, cooperation is rare except among individuals who are closely related. Among humans, in contrast, it is common. The problem is to explain this in view of the temptation to 'defect' from cooperation, obtaining its benefits without its costs." I think the key to this is the evolution of intelligence, which is one reason why Unnatural Selection is such a problem. (And if you doubt this is really happening, you don't read LGF or Daily Kos.)

Bill Taylor about Paul English, writing in the NYTimes: Your call should be important to us, but it's not. I worked with Paul at Intuit; he's a smart guy. His effort Get Human is important. "This month, Mr. English transformed his righteous indignation into a full-blown crusade. He started Get Human, which he calls a grass-roots movement to 'change the face of customer service.' The accompanying Web site sets out principles for the right ways for companies to interact with customers, encourages visitors to rate their experiences, and publishes many more secret codes unearthed by members of the movement. As of last week, the ever-expanding cheat sheet offered cut-through-the-automation tips for nearly 400 companies." I'm a big believer in this; everyone at Aperio has heard me say many times that when people call us, they should immediately be able to talk to a live person.

Ann Coulter previews the Oscars. [ via Powerline ] "I shall grant my awards based on the same criteria Hollywood studio executives now use to green-light movies: political correctness. Also, judging by most of the nominees this year, the awards committee prefers movies that are wildly unpopular with audiences." It would be funnier if it wasn't so true.

Cybele on blogging.la: The Meeting of the Marys. "At Noon today, Long Beach received a royal visitor, the Queen Mary 2, here to greet the city’s own royal resident, the R.M.S. Queen Mary." Great photo...

Congratulations to Floyd Landis, who won the inaugural Tour of California (together with his team, Phonak). By all accounts the race was a huge success. "The week of racing couldn't have ended better for AEG Sports, the sports marketing company that owns and operates the tour. With estimates of over 100,000 spectators in attendance in Redondo Beach, AEG estimates that over one million fans lined the roads of California to experience the event." Wow. I watched the stages on ESPN2, and the crowds looked like European crowds, with people ten deep all along the course. Bike racing hits the big time in the U.S. - finally.

This was also an interesting preview of some of the big American names in cycling; in addition to Landis, George Hincapie and Levi Leipheimer also won stages. With Lance Armstrong retired it is going to be a wide-open season of bike racing this year, with Americans among the top contenders.

So, what did you think of the Olympics? Good? Bad? Indifferent? I liked them. A lot. A lot more than I thought I would. As CNN reports, Olympics ends in a Circus, as they should - it is, after all, entertainment. But it is unstaged entertainment; sports are the ultimate reality show. The uncertainty and finality are what make it great. I'm looking forward to Vancouver 2010 already.

Isn't Sam Sullivan awesome? The mayor of Vancouver, he's been a quadriplegic since breaking his neck skiing when he was 19. What an inspiring guy.

Dave Winer reruns an Ole and Lena joke. I don't know Lena. Do I?

|

|

The Hubble telescope recently captured the highest resolution view of a spiral galaxy, Messier 101. So you know what that means; yep, I downloaded it and posted it for interactive viewing:

(After clicking, hit F11 to maximize your browser's window.)

Stunning, isn't it? Galactic art.

|

Archive: March 6, 2005

|

I received a long picture-laden email entitled "Cool art that will mess with your head", courtesy of my colleague Steven Hashagen. It is indeed cool art and it will indeed mess with your head. I plan to dribble it out over time. Anyway here's the first:

If anyone knows the artist please tell me; I'd love to give this proper attribution.

[ Update 1/7/14: This is The Sun Sets Sail, by Rob Gonsalves ]

|

|

NYTimes: White House approves pass for blogger. Excellent. Amazingly, the usually clueless Times even included the URL to Garrett Graff's blog, FishBowlDC. The Times, they are a changin'...

Steve Rubel: Microsoft Office marketing is stuck in the prehistoric era. "It seems to me like they're trying the same ol' stuff they did back in 1995. Where has the innovative Microsoft Office marketing machine gone? The company's army of 1200+ employee bloggers do more to market Microsoft's products/services these days than anything the corporation has done in years." I don't know, I think the dinos are cute. Paleolithic, I think. And they are a tribute to office diversity :) [ via Scoble ]

Chris Anderson: The tragically neglected economics of abundance. "Although there may be near infinite selection of all media, there is still a scarcity of human attention and hours in the day. Our disposable income is limited. On some level, it's still a fixed-pie game. Offer a couch potato a million TV shows and they may end up watching no more television than they did before; just different television, better suited to them." Another great introspection about the long tail. Chris' blog is batting about a thousand; every post seems worthwhile. Subscribe to it!

Oh, and here's another: What about producers? "It's worth noting that commercial success is not the only (or even main) reason to be a Long Tail producer. Most authors write books not to get rich but to reach a readership, whether it be to enhance their academic reputation, market their consultancy, or just leave a mark on the world. The Long Tail effect may not pay their rent, but it will find them a bigger audience, and if what they're offering is really good it may be dramatically bigger."

Olivelink is person-to-person video streaming. Wow. Kind of like video podcasting. [ via Matt Haughey ]

|

Archive: March 3, 2004

|

Yeah, I'm back. Unbelievably, except for a brief post a month ago to say I was alive, I have now not posted for six weeks. But I'm back...

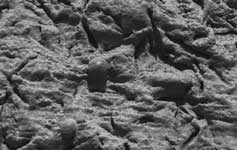

So the big big news, in an otherwise big news day, was yesterday's announcement by NASA engineers that Opportunity has found "strong evidence" that Meridiani Planum was wet. And not just damp; "Liquid water once flowed through these rocks. It changed their texture, and it changed their chemistry." To me this is not surprising, but it is amazing, on several levels.

First, if we go on to discover that Mars held (or indeed holds!) life, this will be seen as the first harbinger. That would be amazing. Unbelievably amazing, actually. But even if we don't discover such evidence, what's also amazing is our ability to detect and interpret this evidence. The Earth is roughly 4.5 billion years old. Homo sapiens are roughly 150,000 years old, a mere blip. But only in the last twenty years or so have we had the technology to send robots to another planet which can probe for this kind of information, and transmit it back. That's amazing. And the geological knowledge that enables scientists to interpret this information, with confidence, is amazing as well.

What a great time to be alive!

|

Archive: March 8, 2003

|

Just wanted to welcome Rob Smith and his Gut Rumbles to the blogroll. He hosted last week's Carnival of the Vanities, and was good enough to include me, but the reason I'm adding him is that he's funny. In kind of a crude, gut rumbly way. Check him out! P.S. Yeah, he did say I made his head hurt, but I like him anyway.

|

|

Email is one of the greatest things the computer revolution has done for personal productivity. Used improperly, it can also hurt your productivity. This article discusses ways to use email effectively. Then it goes beyond that and talks about how to be productive, period.

When Email Goes Bad

I'm not going to list all the reasons email is good. You know them already; I assume you are an avid email user. (Anyone reading this is online, and just about anyone who goes online uses email.) I'm also not going to tell you email is evil, because it isn't. The negative productivity impact of email comes from the way you use it, not the medium itself.

There are two ways email impairs your productivity:

- It breaks your concentration.

- It misleads you into inefficient problem solving.

Let's take the concentration impact first. I'm a software engineer, and programming requires extended periods of concentration. Actually, this isn't unique to programming; a lot of fields require that you concentrate. (Probably just about everything worth doing requires some concentration!)

{

I maintain that programming cannot be done in less than three-hour windows. It takes three hours to spin up to speed, gather your concentration, shift into "right brain mode", and really focus on a problem. Effective programmers organize their day to have at least one three-hour window, and hopefully two or three. (This is why good programmers often work late at night. They don't get interrupted as much...)

}

One of the key attributes of email is that it queues messages. Unlike face-to-face conversation and 'phone calls, people can communicate via email without both paying attention at the same time. You pick the moments at which you pay attention to email. But many people leave their email client running continuously. This is the biggest baddest reason why email hurts your productivity. If you leave your email client running, it means anyone anytime can interrupt what you're doing. Essentially they pick the moments at which you pay attention. (Even some random spammer who is sending you a crappy ad for a get-rich scheme.) This is bad.

There are three stages to this badness. Stage one is configuring your email client to present alerts when you receive an email. Don't do this. Stage two is configuring your email client to make noise when you receive an email. Don't do this. Stage three is running your email client all the time. Don't do this, either. To be effective, you must pick the moments at which you're going to receive email. I know this goes against common wisdom. Just about everyone I know runs their client all the time, has it configured to make noise, and may even have it present alerts when an email is received. Don't do it.

Spam is the best kind of email to get, because you look at it quickly, see that it's spam, and delete it. Then you get back to work. Personal email is the second best kind of email to get, because you either respond quickly ("Hi Jane, great hearing from you. See you at the club tonight.") or set it aside for later. Task-oriented work email is the worst kind of email to get. It often requires thought, and because it is work there is some immediacy to it. But as soon as you take the time to respond, you've interrupted yourself. You've shifted back to "left brain mode", and you've lost the thread of your concentration.

This doesn't mean you shouldn't respond to emails promptly. Check email whenever you're interrupted anyway - before you start work, after a meeting, after lunch, before you go home, etc. Set aside time to do this. Just don't let others dictate the timing.

Has this ever happened to you?

[ In the hallway at work... ]

O: "Hi R, how's it going?"

R: "Great, how are you?"

O: "Good. Hey, did you see my email about the framitz?"

R: "No, I haven't checked my email yet today, sorry."

O: "WHAT!"

It has happened to me. Sometimes I can't believe it - I sent the email at 9:30, and here it is 11:30, and they haven't checked their email? What are they doing? They're being efficient, that's what. They're picking their moment to be interrupted, and that's a good thing. We'll revisit this theme below in the Three Hour Rule. For now, here's the takeaway:

- Turn your email client off. You should pick the moment at which you'll be interrupted.

Okay, now let's look at the second productivity-sapping attribute of email, that it misleads you into inefficient problem solving. Email is a communication medium. You send messages to others, you receive messages from others. Some of these messages are mere data transmission - FYIs so you know what's going on. Some are "noise" - 'thank you's, 'I got it's, jokes, etc. And some - many - are problem solving. You hear about a problem, and you respond with a possible solution, or a possible approach, or more questions. Nothing wrong so far - email is a good medium for problem solving. And it is so easy - you get an email, you think (sometimes), and you respond. Poof, you're done.

Except when you're not. Because there are some kinds of problems which don't get solved in email, ever. And as soon as you have that kind of problem, you have to stop, immediately, before you make the problem worse.

First, never, ever, criticize someone in email. For reasons which I have never fully grasped, any negative emotion is always amplified by communication through email. Sometimes you intend to be critical - someone has done something dumb, or said something silly, or emailed something ridiculous. Resist the urge to reply. Sometimes you don't mean to be critical - you're just making an observation, or engaging in technical debate, or adding facts to a discussion. But as soon as you sense that the recipient has taken your email as criticism, you must immediately switch media - a face-to-face meeting is best, but a 'phone call is also okay.

Second, don't get into prolonged technical debates in email. I've seen threads lasting weeks with a whole series of kibitzers, with everyone restating their points of view and nothing getting settled. Often email has the effect of polarizing the debate, and the combatants end up further apart in their views than when the debate began. As soon as you sense this happening, you must immediately switch media. A meeting with the core people involved is best, but a conference call is also okay.

Both of these kinds of problems which don't get solved in email are exacerbated by copying others. The bigger the audience, the worse things get. As bad as it is to be critical in email, it is far worse if ten colleagues are copied. Often the presence of an email audience is what makes for the polarization of technical debates - if the core people were the only ones involved, they would be less virulent and more willing to acknowledge other points of view and seek compromise. Okay, so here's the takeaway:

- Never criticize anyone in email, and avoid technical debates. Use face-to-face meetings or 'phone calls instead.

Before I go on to talk about productivity in general, let me share some other thoughts about email. First, be judicious in who you send email to, and who you copy on emails. Every email recipient is going to lose a little time reading each email you send. Simple emails which say "thanks" or "got it" or "see you at the meeting" are polite and part of normal human communication. But there is a limit; there's no need to reply "you're welcome", or "glad you got it", or "great, I'll see you, too". In my career I've run large teams, and sometimes people in those teams copied me on virtually every email they sent. Maybe they wanted me to know what was going on, or maybe they were letting me know what a great job they were doing. Either way, they were taking my time with stuff I didn't need to spend time on. I have a high capacity for skimming email, but there is always the feeling that they didn't get it; like "why did they copy me on this?" There should be a purpose to every addressee on each email. It is okay to drop recipients from a reply - in fact, it is good; fewer people are involved, and [to reiterate the point] the bigger the audience, the more any implied criticism or debate will be exacerbated.

{

I have to digress for a pet peeve. I send an email to S, and S replies, copying eight other people. I reply back to S alone. S replies, again copying eight other people. This is bad. If I'm smart I will abandon email and continue the conversation with S face-to-face or over the 'phone. If I'm not smart I'll flame S so badly his hair catches fire, copying everyone, and regret it later.

}

Second, email is a very relaxed medium, but observing some formality is important. Use an email client which spell checks. Use normal capitalization. Use correct grammar - complete sentences make email easier to read, just like everything else. Don't use weird background colors and strange fonts. Don't append pictures of your dog. You get the picture... I've received emails from senior people which bordered on illiterate, with incorrect capitalization and grammar, incomplete sentences, etc. The impression is not positive.

Third, email can be immediate, but don't hesitate to review and revise important emails. In many companies email has all but replaced paper memos. In many business situations email has replaced letters. When writing an email which has a wide distribution, or which affects a negotiation, or possible deal, or potential sale, take the time to write a draft, and reread it later. You can almost always improve the wording, make a point more concisely, or otherwise improve the communication.

Finally, remember that email is a public and permanent record. Email is plain text and goes out over public networks, and is often stored on servers for a long time and may be backed up for a longer time. It might feel "throwaway" at the time, but it will not be thrown away, as senior executives at Microsoft, Enron, Worldcom, and others have discovered. If you have something to say which won't bear the public light of day, it shouldn't be said in email. And if you are sending something confidential or sensitive, consider sending it as an encrypted and/or password-protected attachment.

Okay, enough about email. Here are the six rules for avoiding email tyranny:

- Turn your email client off. Pick the moment at which you'll be interrupted.

- Never criticize anyone in email, and avoid technical debates. Use face-to-face meetings or 'phone calls instead.

- Be judicious in who you send email to, and who you copy on emails.

- Observing some formality is important.

- Don't hesitate to review and revise important emails.

- Remember that email is a public and permanent record.

Got it? Cool. Thinking about email productivity led me to make some comments about productivity in general...

The Three Hour Rule

Programming is a right-brain activity. It is very conceptual and spatial and [gasp!] artistic. Effective programming requires that you transition from your body's normal "left brain" mode into a "right brain" zone. As I mentioned above, programming cannot be done in less than three-hour windows. Really. And in talking to friends in other fields, I'm convinced this applies to many other lines of work.

When you're in a three-hour zone, you've spun up to speed, gathered your concentration, shifted into "right brain mode", and are focusing on a problem. You're being productive. There are four things which can interrupt you, and you have to watch out for all of them:

- Receiving email or 'phone calls.

- Personal contact with colleagues.

- Meetings.

- Warp-offs.

Let's talk about each of these... First, emails or 'phone calls. Email we've talked about; this one is easy - just turn your email client off. Done. Most people receive far fewer 'phone calls than emails, so calls aren't nearly as much of a problem. The solution is the same - put your phone in "do not disturb" mode. Nowadays almost everyone has a cell 'phone; leave that on, and if there is a genuine emergency your significant other or doctor or whoever will reach you there. Most calls to your desk are colleagues or customers; these are important, but as with email, you should pick the time to take them.

Second, there is personal contact with colleagues. Most companies these days can't afford for everyone to have a private office, so it is pretty easy to get interrupted. (If you have an office, close the door!) Distractions include ambient noise, questions ("Hey, do you know how to invoke a framitz?"), and other interruptions ("Hey, you want to play foosball?"). These are really important (especially foosball), but they are interruptions, and they will mess up your three-hour window. Basically you want to isolate yourself from your colleagues, just like with email and 'phone calls. To deal with ambient noise, get yourself some really good headphones and play music. Cordless, if you want. For $100 you will have the best-sounding music you can imagine, and a sure-fire way to eliminate background noise.

{

The "office vs. cubicle" debate rages and has not been settled. Some companies give every engineer their own office, and claim the productivity improvement is worth the cost. Others feel the atmosphere is better in a cubicle farm, and the interaction between engineers leads to better problem solving. Without taking a stand in this debate, the fact is that most engineers work in cubicles, and have little control over this. So it is what it is - you have to make the best of it.

In 2000 I joined PayPal, a dot-com with an egalitarian work environment where everyone had a cubicle, even the CEO. After many years of enjoying a private office, I was back in a cube. I quickly found two things to be essential: first, I positioned my desk and computer so I was not distracted by traffic (away from the cube opening), and second, I bought a great pair of cordless headphones. With these adaptations I was able to work just as productively as I had in an office. (Of course I used conference rooms for meetings.)

}

Dealing with questions and interruptions from colleagues is more difficult. The give-and-take between engineers in a team is important; often one person will have the answer to another's dilemma. There is also the social aspect; it is enjoyable to interact with your colleagues. However, you need to have those three-hour windows. I recommend a simple sign you can hang on your cube: "I'm in a zone", "Do not disturb", etc. (This is a chance to be creative...) Essentially you want your colleagues to know you're zoning. If they have a technical question which can wait, they can put it in email, or wait until you emerge. If they need immediate attention ("hey, you want to play foosball?") at least they know you were in a zone, and that they're interrupting you.

Third, meetings... Ah yes. An entire book can be written about meetings, and many have. Let me make a few comments about meetings and then leave it. Meetings interrupt everyone who attends, obviously, so they are "expensive". They are also often the best way to communicate team status and to problem-solve. So there is tremendous leverage in having good meetings instead of bad ones. Each meeting should have a well-defined purpose, and the organizer should keep the meeting on track. It is good to have meetings "first thing", bordering on lunch, or at the end of the day; this way people's three-hour windows are less affected. Enough about meetings... they are what they are.

Finally, warp-offs. So, what's a "warp-off"? Well, unlike the other three kinds of interruptions, in which other people interrupt you, a "warp-off" is when you interrupt yourself. Generally this happens because you're stuck - you don't know what to do next - so you switch tasks and do something you know how to do. My favorite warp-off is surfing the Internet. Sometimes when I'm working on a tough problem, I have to force myself not to do it. Other possible warps include: reading email (!), working on "fun" stuff instead of "hard" stuff, bugging your colleagues ("foosball, anyone?"), and of course posting to your 'blog :) Keeping yourself from warping off is really tough, and gets into what motivates people and a bunch of stuff I can't really tackle here, but the main thing is to be self-aware enough to realize that you do it (everyone does), and strong enough to work on not doing it. I tend to warp when I'm stuck, so the best un-warp strategies for me are ways to unstick myself. These include talking to others, taking a bike ride, thinking out of the box (generally above the box - take a bigger picture view), trying to simplify the problem, and relentless application of W=UH ("if something is too ugly or too hard, it is wrong").

{

In re: working on "fun" stuff instead of "hard" stuff, it is interesting to think about what makes some tasks fun and others hard. I think happiness comes from liking yourself, and fun things are things which make you like yourself. Tasks which are fun are therefore tasks which you know how to do, and which demonstrate your proficiency. Tasks which are hard are tasks which you don't know how to do, or which reveal a lack of expertise. There is often feedback involved - fun tasks will gain you recognition from customers or coworkers, but hard tasks may not.

When you get stuck and find yourself doing something "fun" instead of something "hard", ask yourself what makes the hard thing hard? In a perfect world each person would always be assigned tasks which they're good at, and which gain them recognition, so that everything they do is fun. The world isn't perfect, but that's the goal.

}

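Okay, that's a lot of words, let's see if we can summarize. There is essentially one big rule and four guidelines:

- The Three Hour Rule: programming (and much other work) cannot be done in less than three-hour windows.

- Turn your email client off, and put your 'phone in "do not disturb" mode.

- Isolate yourself from your colleagues: good headphones, and a sign letting them know you're in a zone.

- Give each meeting a well-defined purpose, and schedule it first thing, bordering on lunch, or at the end of the day.

- Watch out for warp-offs; when you're stuck, find a way to unstick yourself.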

That's it - thanks for your attention. If you have comments about any of this, I'd love to hear them; please shoot me an email. Don't worry, it won't interrupt me :)

[ Later - this article generated a terrific response - thank you! - and I have summarized the most interesting observations and comments as Tyranny Revisited... ]

[Update 3/5/09 - the antidote to the tyranny of email... ]

|

|

|